
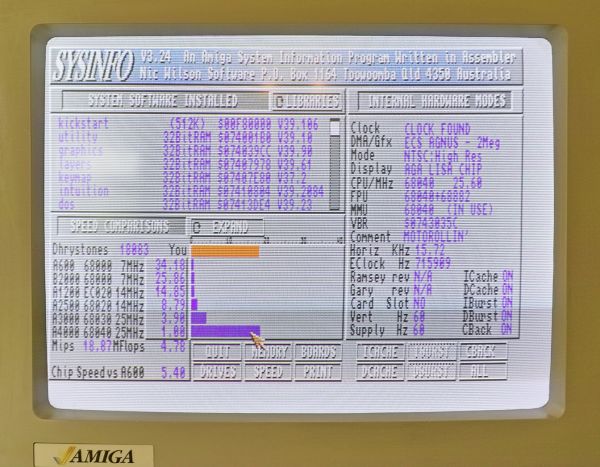

It's difficult to imagine, in this day and age of 4k video, gigabytes of video RAM, and VPUs that can mine Bitcoin faster than the CPU, that we were so pious in our worship of the NTSC standard. Now that we no longer need the thing, it's easy to admit it was kind of a lousy standard.

When color video was introduced in the USA, the horizontal scan rate was set at precisely 15750 * (1000/1001) Hz, i.e. roughly 15734.27 Hz, and the color sub-carrier frequency (also called the chroma clock) was defined as 227.5 times the horizontal scan rate, i.e. roughly 3579545.4545 Hz. Many computers of that era use a multiple of the chroma clock as the pixel clock (the Amiga, for example, uses 4x chroma, or 14318181.818 Hz).

Why didn't these color-computers just operate asynchronously and run the CPU at max spec, while the video system operated on color-clock? Because memory was at a premium in those days, so they used memory-mapped video. This was dual-ported in the simplest possible scheme**, which required that memory clocks be in lock sync with the video system. The Apple II even used the video system to accomplish dynamic memory refresh.

Despite staggering chip-fab capability, the IBM PC team was pathological about using off-the-shelf designs and components. This allowed them to leverage all the color-computer design which had come before, rather than having to reinvent the wheel. The PC's CPU clock is that same 14.31818 MHz crystal divided by 3, or about 4.77 MHz. (But keep in mind memory clock is 1/4 of that, so memory throughput was crystal/12, the same as the Atari 2600 - ha!) Why choose a multiple when video cards had dedicated video RAM and ran on their own clocks? For one thing, it "kept the door open" to a future "color computer" design with shared video RAM, which came to fruition as the IBM PCjr.

The Amiga, the last of the machines built with the "color computer" mindset (heh, speaking of another), deliberately chose a CPU speed again divided down from that same familiar crystal, and again lockstepped to the NTSC color clock.

** To digress on pre-Amiga-age dual-porting: On the Apple II, the video system got every other RAM cycle whether it needed it or not (hence running at half the speed of the Atari), and in classic Wozniak style, he rearranged the memory mapping of video so the video system would also do dynamic RAM refresh. On the Atari, the far more sophisticated ANTIC system interrupted the CPU to take the memory cycles that video needed, as well as dynamic RAM refresh. This meant available CPU power varied between display modes and with whether you were in vertical blank (a significant amount of time when the raster was off the visible screen).

In a lot of Atari games, the CPU "rode the raster", spending its very limited CPU cycles to prepare changes to colors or sprites that would happen on the next scan line, wait for horizontal-blank, execute on cue, and prepare the next. All the computational work of playing the game happened once the raster got to a low-attention area like the bottom scoreboard, and during the vertical blank period. All this was coded in assembler of course, with painstaking cycle-counting to make sure the operations could happen in the requisite time, given the cycles ANTIC would steal for the video mode you requested.
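The clock relationships in this post are plain arithmetic, so here is a minimal Python sketch that checks them with exact fractions. The NTSC figures come from the text. The divide-by-3 step for the IBM PC's CPU clock and the chroma/3 clock for the Atari 2600 are assumptions added here for illustration, as are all of the variable names.

```python
from fractions import Fraction

# NTSC color timing as stated in the text.
H_SCAN = Fraction(15750) * Fraction(1000, 1001)  # horizontal scan rate, Hz
CHROMA = Fraction(455, 2) * H_SCAN               # 227.5 x scan rate, Hz
CRYSTAL = 4 * CHROMA                             # 4x chroma, Hz

# Assumption (not spelled out in the text): the IBM PC divides the
# 4x-chroma crystal by 3 to get its CPU clock.
PC_CPU = CRYSTAL / 3
# From the text: the memory clock is 1/4 of the CPU clock, i.e. crystal/12.
PC_MEM = PC_CPU / 4

# Assumption (not spelled out in the text): the Atari 2600 CPU runs at
# chroma/3, which is the same thing as crystal/12.
ATARI_2600_CPU = CHROMA / 3


def hz(freq: Fraction) -> str:
    """Format an exact frequency as a decimal string in Hz."""
    return f"{float(freq):.4f} Hz"


if __name__ == "__main__":
    print("horizontal scan :", hz(H_SCAN))
    print("chroma clock    :", hz(CHROMA))
    print("4x chroma       :", hz(CRYSTAL))
    print("PC CPU clock    :", hz(PC_CPU))
    print("PC memory rate  :", hz(PC_MEM))
    print("same as 2600?   :", PC_MEM == ATARI_2600_CPU)
```

Using `Fraction` rather than floats keeps every division exact, so the "crystal/12, the same as the Atari 2600" comparison is an equality check and not a near-miss between rounded decimals.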
